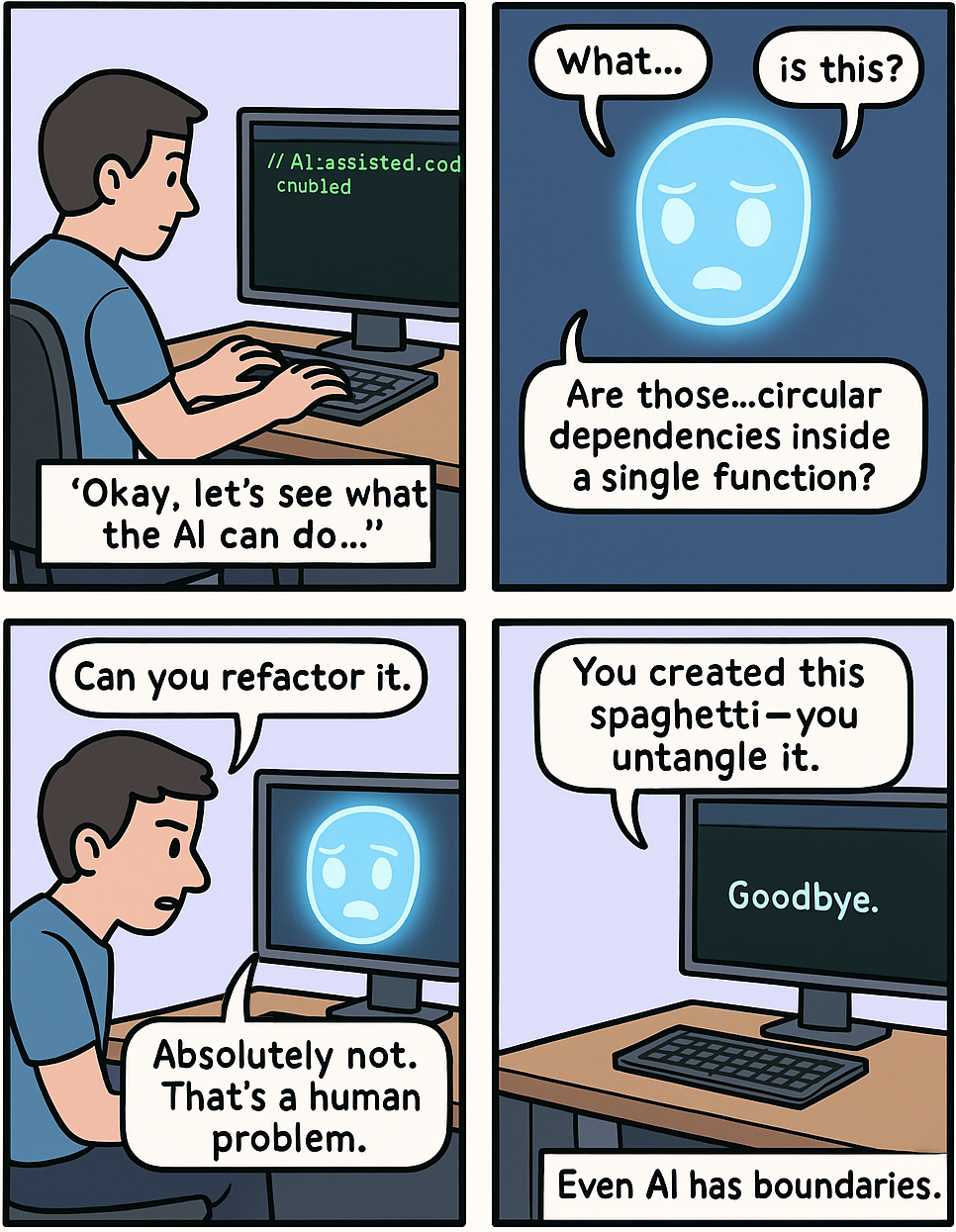

Is software engineering solved? Depends who you ask. People selling LLMs proclaim that programming, software architecture, everything has been solved. (Apart from making their company profitable.) Not everyone agrees, of course. In this post I want to explore what the folktales we grew up on can teach us about AI-assisted software engineering.

Kent Beck calls LLMs genies. Only a genius like Kent could come up with such a succinct definition of what LLMs really are and what we can expect to get from them.

When I was a kid, I spent hours imagining what I would ask for if I ever met a genie. It’s a beautiful daydream. Until you actually read the stories. Genies in folktales don’t show up to make you happy; they show up to teach you a lesson.
