I was recently asked this question on LinkedIn. My short answer: yes. But the more interesting question is why, and why AI might make the SOLID principles more relevant, not less.

Reframing the Question

Some say SOLID principles are outdated. They’re not. SOLID principles are guidelines for designing modular systems. They address forces that exist in software regardless of who (or what) produces the code. AI changes the author. It doesn’t change the physics of software complexity.
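To make "modular" concrete, here is a minimal sketch of one SOLID principle, dependency inversion, in Python. Every name in it (`Notifier`, `InMemoryNotifier`, `OrderService`) is hypothetical and chosen only for illustration. The point is that the high-level class depends on an abstraction rather than a concrete implementation, and that holds whether a person or an AI assistant wrote either side.

```python
from typing import Protocol


class Notifier(Protocol):
    """Abstraction the high-level code depends on (hypothetical name)."""

    def send(self, message: str) -> None: ...


class InMemoryNotifier:
    """One interchangeable implementation: records messages instead of sending them."""

    def __init__(self) -> None:
        self.sent: list[str] = []

    def send(self, message: str) -> None:
        self.sent.append(message)


class OrderService:
    """High-level logic that knows only the Notifier abstraction."""

    def __init__(self, notifier: Notifier) -> None:
        self._notifier = notifier

    def place_order(self, item: str) -> str:
        confirmation = f"Order placed: {item}"
        self._notifier.send(confirmation)
        return confirmation


# Usage: any object with a matching send() method can be swapped in.
notifier = InMemoryNotifier()
service = OrderService(notifier)
service.place_order("book")
```

Because `OrderService` never names a concrete notifier, an email or SMS implementation could replace `InMemoryNotifier` without touching the order logic. That containment of change is what keeps rapidly generated code from spreading its design decisions across the whole system.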

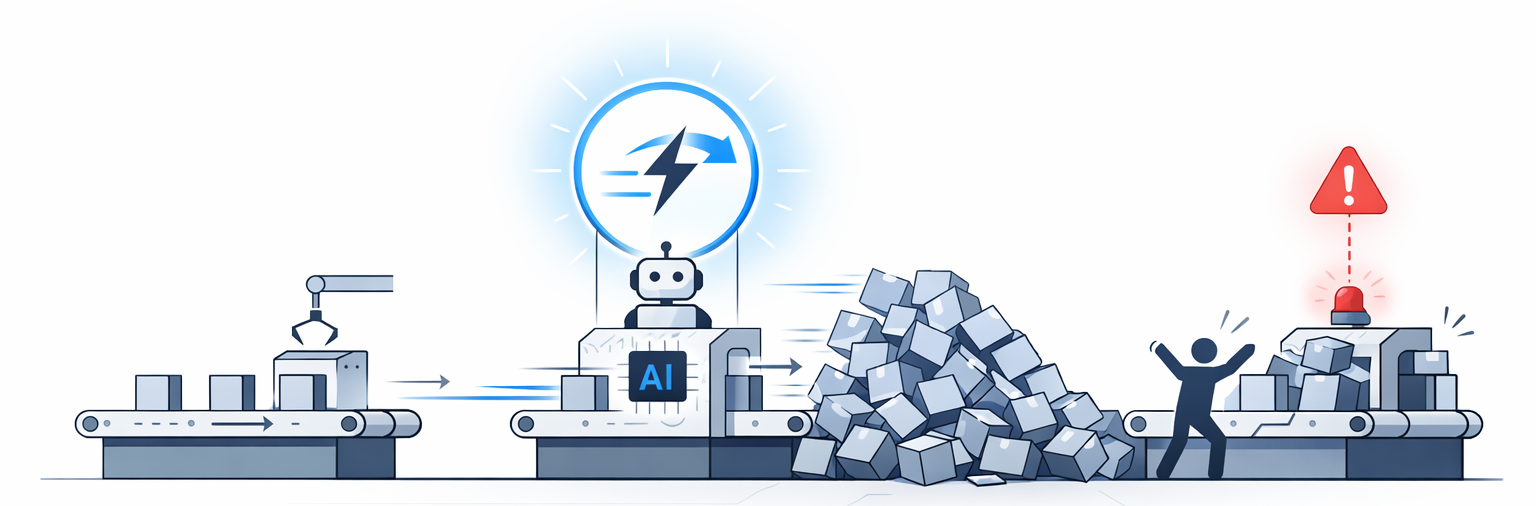

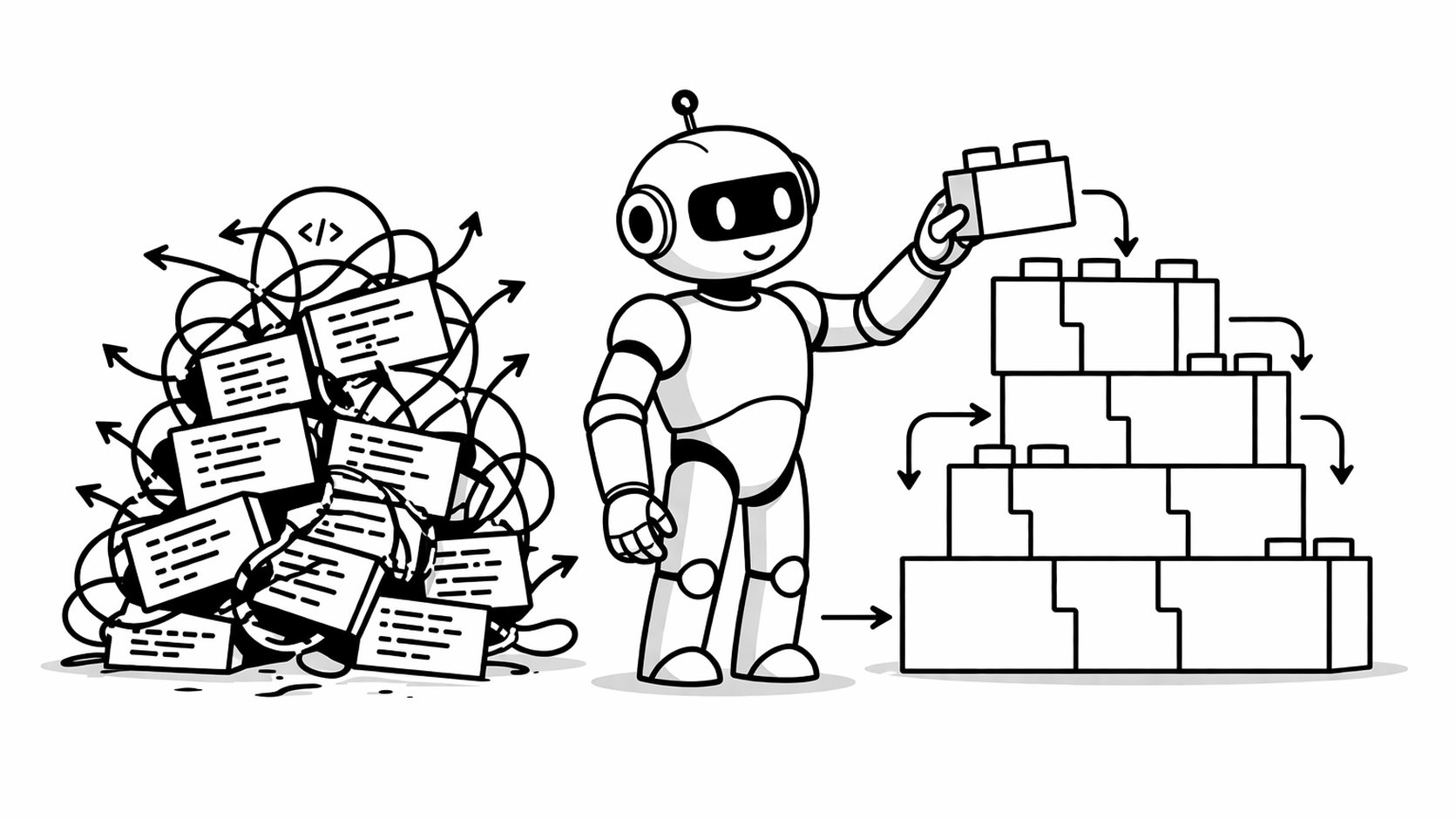

If anything, AI amplifies the need for modular design. We can now generate code at unprecedented speed. That’s a double-edged sword. You can build a well-structured system faster than ever. But poor design decisions will accumulate technical debt at a pace we’ve never seen before.
